Mapping Biases to Testing: An Inconvenient Truth

Did you know that having a personal doctor at your disposal could do more harm to your health than good? I’ll explain why. You pay the doctor, and with the best of intentions they want to do good and be useful. So they start assessing your health. Chances are, some of your health parameters are slightly off. The doctor prescribes some pills to make you feel better. Nothing seems wrong with this, but those pills can have side effects that make you feel bad in other ways. The doctor then wants to help you with that, and soon more pills and vitamin supplements are going through your system. All with good intentions, but was the first choice ever really clear? Did you really need that first pill to solve your slight health problem?

Don’t misunderstand me: there are many cases where medicine is absolutely warranted. But for slight health problems, people often don’t realise they have a choice whether to take the prescribed pills. You take your doctor’s advice for granted because you pay them and you believe in their expertise. But taking medicine always comes with the risk of side effects.

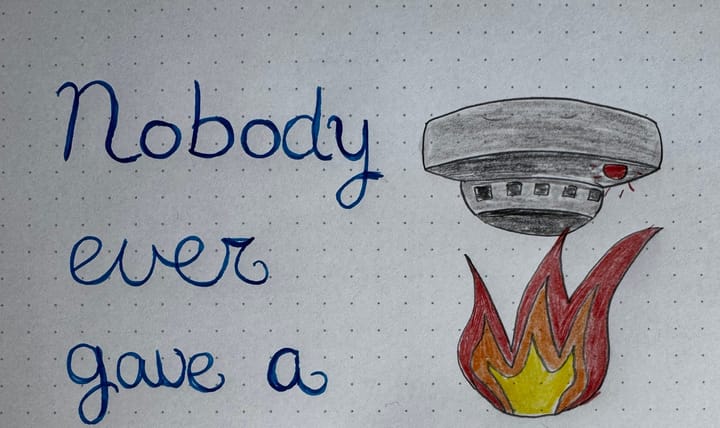

It’s the same with testing. We are the doctors in this scenario, and the medicine we prescribe is “more testing”. We do this with good intentions, because we believe we need to uncover the problems that exist so that we (the team) can fix them. We seldom ask ourselves how much of the medicine we should prescribe.

In the case of test automation, the general tendency is to want ‘more of it’, with ‘more coverage’. We get stuck talking about the different brands of automation medicine available (frameworks), but we don’t talk about the problems they are supposed to solve. We don’t diagnose first. We rarely talk about the test techniques and test types that drive the effectiveness of this medicine. Automating more tests comes with great responsibility: maintenance, questioning relevance, checking for false positives and false negatives, and so on.

In the case of exploratory testing, we pride ourselves on finding odd bugs and black swans in the application, but we don’t stop to think whether all of this is relevant. In my current project, I found a bug that I thought was really annoying, but it had been in production for years and customers weren’t complaining about it. So is it really worth all the money and effort to fix it? Analytics showed that only 0.6% of the user base even touched the screen where the bug could occur. As a tester I care about this stuff, but that’s because I’m biased to. I used to push hard for every bug to be fixed, without considering how often it would occur or whether it would annoy users. Now I try to take a data-driven approach where possible (I’m upping my analytics game this year!).

To summarise: more testing as medicine isn’t always better, but that’s a hard pill to swallow (🥁) when testing is your profession and you feel almost compelled to be useful and do good. It’s especially hard when your intentions are good and you feel you are doing the right thing.

There’s no simple solution, since the challenge lies in finding out whether, and when, you might be testing too much (or too much of the wrong thing). Asking yourself this question and exploring it is valuable. I don’t think there is a single answer, but reflecting on it can start you on a journey towards different approaches.

Are you prescribing too much testing medicine in your project? How would you try to find this out?

Other posts in this series:

Mapping biases to testing, Part 1: Introduction

Mapping Biases to Testing: Confirmation Bias
